It’s long overdue and long in the works: no one has quite complemented, mimicked, or successfully copied the Jim Stroud recipe for candidate engagement. It’s something that I deeply admire. Producing engaging, quality content is difficult. With all of the conversations around artificial intelligence (AI) and augmented reality (AR) at SourceCon, what would it be like if you could produce the same level of engagement that Stroud creates on YouTube? What if you could do it at the individual candidate level? Would you join that conversation, one about people’s workflows and their social apps, whether on LinkedIn, Snapchat, Instagram, Facebook, or YouTube?

Next month, you can be Stroud. For Free.

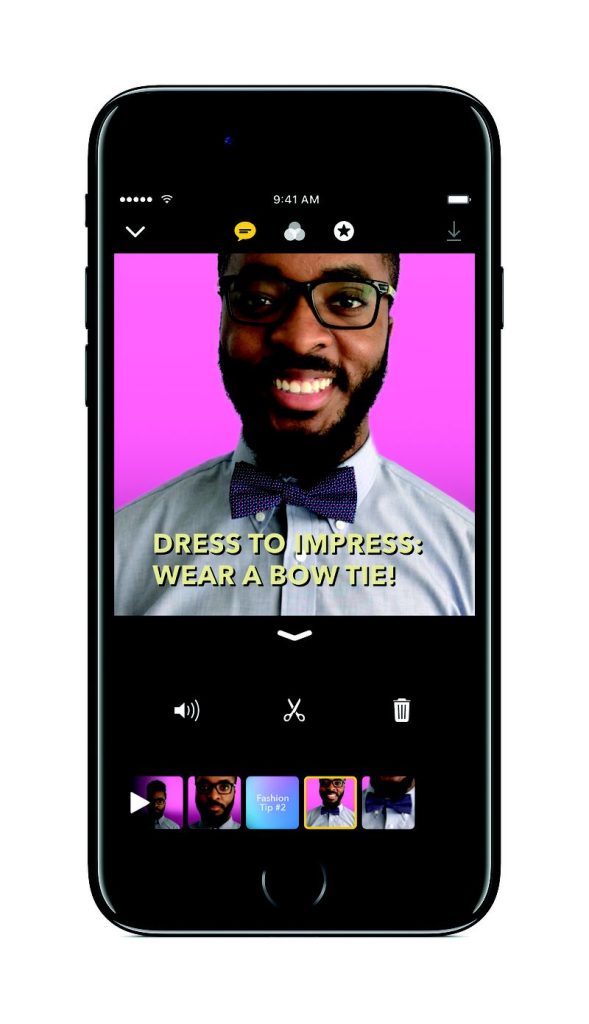

Rumored since late 2014, Apple’s been cooking up an iOS app that is a mix of Snapchat and iMovie, but with AI and AR. Called Clips, it lets you share photos or videos up to 30 minutes in length with special filters and effects. As Apple’s first true native iOS video-editing experience, it’s intended to copy the success competing companies have had in creating consumer-friendly video and photo-editing tools with fun and transformative features like augmented reality filters and voice-changing capabilities. But it’s not a Snapchat clone.

Yes, you can record a bunch of snippets, package them together in one video, and then add music, text, filters, emojis, shapes, and more. Clips includes a bunch of full-screen animated posters and backgrounds to choose from, as well as a library of music tracks that automatically adjust to fit the length of your video.

But that’s not what we are here for! Tell me how I can be Stroud!!!

https://www.instagram.com/p/BSKqOfABM3n/?taken-by=jimstroud

Where’s the AI?

Apple’s Clips app is imbued with its trifecta of artificial intelligence:

- Computer Vision: The app automatically recognizes faces using machine learning.

- Natural Language Processing (NLP): The app introduces Live Titles, a voice-to-text feature that supports 36 languages, letting users create animated captions and titles for their content using just their voice.

NLP

A unique feature is Live Titles, which lets you superimpose text over a video clip using your voice. It also goes one crucial step further: Apple claims it synchronizes the text to the cadence of your voice. If it works as advertised, Clips’ speech-to-text feature will be the easiest method yet for closed-captioning social videos. That makes Live Titles a neat solution to an ironic and increasingly irksome problem: The more people use video to communicate, the more they need text to tell them just what those videos are saying.

Text-on-screen has become a fundamental barrier to entry not just for media outlets and YouTube stars, but for anyone who wants their social videos to be seen by as many eyeballs as possible. People are consuming more video content than ever (according to Facebook’s latest numbers, half a billion people watch 100 million hours of video on the platform every day), so it stands to reason they’re watching more muted content than ever, too.

This is the means to get more out of our increasingly dull job postings. Even with original images and videos, the text remains essential to the narratives we all spin to engage with candidates.

One More Thing

If users post their Clips videos to Apple’s Messages app, Apple will recommend whom to share them with based on which friends are in the videos and whom the user frequently contacts. This is the kind of predictive social feature Facebook typically excels at. In its guidance and last quarterly earnings call, Apple reminded us that Messages is the most commonly used app on iOS devices, giving the company potentially up to 800 million users for its latest messaging platform. Snapchat, by contrast, has 161 million daily active users. While Clips will technically be a separate app from Messages, it will be tied closely to it for the ability to share Clips videos with other Apple users.

While it sounds a lot like Snapchat, it’s also Apple’s first major foray into augmented reality, an area that the company has repeatedly signaled its interest in, and which might be the basis for our next way to engage candidates.

With the ability to connect and engage with candidates through personalized video, who will be the next Stroud?