Before we kick off a series of posts on some of the semantic search engines available today, we felt it was important to break semantic web technology down a little and show how it actually works.

Other folks in the sourcing world have written excellent posts on semantic search as it applies to recruiting. Rather than rehashing what they’ve already so generously shared, we recommend reading these posts to get a good idea of how semantic search can be useful in recruiting:

- Why Is Semantic Search Important To Recruiters? – Shally Steckerl

- Semantic Search for Recruiting: Whitepaper – Bryan Starbuck and Shally Steckerl

- Semantic Search for Recruiters: Manual vs. Automated – Glen Cathey

- Navigating Semantic Search (slide deck) – Irina Shamaeva

- Semantic Search: Future or Hype? – Michael Marlatt

But how does the semantic web actually work? We’ll try to break this rather complicated process down…

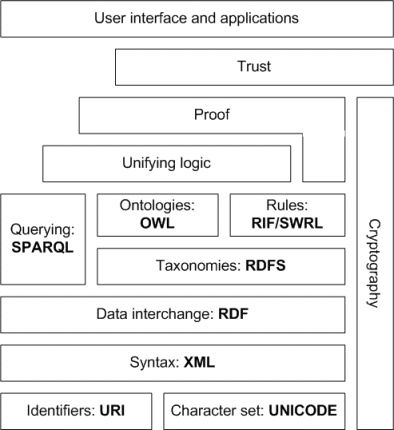

Let’s start with visualization. Here is the diagram of how the semantic web operates – also called the Semantic Web Stack:

Most of you are probably scratching your heads at this point, saying, “I thought OWL was a type of bird.” So we’ll do our best to define these components, with the help of the World Wide Web Consortium (W3C), Wikipedia, and a few other resources. The stack falls into three groupings:

The Hypertext Web technologies, which are the basic foundations of the semantic web:

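- URI: Uniform Resource Identifier; a standard syntax for giving a resource (a web page, a person, a company, even an abstract idea) a unique name. URLs (web addresses) and URNs (location-independent names) are both kinds of URI. In other words, the standardized way of naming things on the web, not just web page addresses.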

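- Unicode: a universal character-encoding standard that assigns a unique number to every character in most of the world’s writing systems (commonly stored as UTF-8 or UTF-16). So think of it as a universal alphabet that lets computers store and display text the same way, whatever language it is written in.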

- XML: Extensible Markup Language; a metalanguage that uses tags to mark up the elements of a document. It is a set of rules for describing data in a machine-readable format. Probably the most recognizable XML-based format right now is RSS.
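For readers who want a peek under the hood, here is a minimal Python sketch of these three foundation layers working together, using only the standard library. The URI, the candidate’s name, and the RSS-style feed are all made up for illustration: the URI is split into its standard parts, the feed is parsed as XML, and the title deliberately mixes scripts to show Unicode at work.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# URI layer: a hypothetical URI naming one candidate's profile,
# split into the standard parts that every URI shares.
uri = "https://example.org/people/zoe-muller#profile"
parts = urlparse(uri)
print(parts.scheme, parts.netloc, parts.path, parts.fragment)

# XML layer: a tiny, made-up RSS-style feed. The tags tell a program
# which text is a title and which is a link.
feed = """<rss version="2.0">
  <channel>
    <item>
      <title>Zoë Müller (エンジニア)</title>
      <link>https://example.org/people/zoe-muller</link>
    </item>
  </channel>
</rss>"""

root = ET.fromstring(feed)
item = root.find("channel/item")
title = item.findtext("title")
link = item.findtext("link")
print(title, link)

# Unicode layer: the title mixes Latin, accented and Japanese characters;
# encoded as UTF-8, the non-ASCII ones take more than one byte each.
print(len(title), "characters,", len(title.encode("utf-8")), "UTF-8 bytes")
```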

The Standardized Semantic Web technologies, which are the tools that have been accepted as standards for building semantic web applications:

- RDF: Resource Description Framework; a general method for decomposing any type of knowledge into small pieces, called triples, each made of a subject, a predicate, and an object, with some rules about the semantics, or meaning, of those pieces. Think of a simple sentence: “Jane Smith” (subject) “works for” (predicate) “Acme Corp” (object).

- RDFS: RDF Schema; extends RDF with a vocabulary for defining classes and properties, so RDF data can be organized into categories and hierarchies (for example, “every Java developer is a software engineer”). Think of the way we classify all living things: kingdom, phylum, class, order, family, genus, and species.

- SPARQL: SPARQL Protocol and RDF Query Language (pronounced “sparkle”); a query language that can retrieve and manipulate data stored in RDF format. It is the W3C standard query language for RDF data, and therefore for semantic search. Think of it as the search string you type into your favorite search engine, except that it asks structured questions of RDF data.

- OWL: Web Ontology Language; a language that extends what RDF and RDFS can express. OWL adds another layer of semantics on top of RDF, describing information in terms of classes, properties, individuals, and data values, plus richer constraints, such as declaring that two classes can never overlap. So it builds on RDFS; think fact-checkers here.
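To make the standardized layers more concrete, here is a minimal sketch in plain Python, assuming a handful of hypothetical `ex:` resources. It stores RDF-style triples, applies the RDFS rule that membership in a subclass implies membership in its superclass, and then runs a single SPARQL-like triple pattern. It is not a real RDF store or SPARQL engine (in practice you would load the data into an RDF library and write an actual SPARQL query), and the helper function names are our own.

```python
# Each RDF statement is a (subject, predicate, object) triple.
# The "ex:" names are hypothetical; "rdf:type" and "rdfs:subClassOf"
# are real RDF/RDFS terms, written here in prefixed form.
triples = {
    ("ex:JaneSmith", "rdf:type", "ex:JavaDeveloper"),
    ("ex:JaneSmith", "ex:worksFor", "ex:AcmeCorp"),
    ("ex:RajPatel", "rdf:type", "ex:Recruiter"),
    ("ex:JavaDeveloper", "rdfs:subClassOf", "ex:SoftwareEngineer"),
    ("ex:SoftwareEngineer", "rdfs:subClassOf", "ex:Engineer"),
}

def superclasses(cls, graph):
    """Follow rdfs:subClassOf links transitively (an RDFS-style inference)."""
    found, stack = set(), [cls]
    while stack:
        current = stack.pop()
        for s, p, o in graph:
            if s == current and p == "rdfs:subClassOf" and o not in found:
                found.add(o)
                stack.append(o)
    return found

def infer_types(graph):
    """Add the rdf:type triples implied by the class hierarchy."""
    inferred = set(graph)
    for s, p, o in graph:
        if p == "rdf:type":
            for sup in superclasses(o, graph):
                inferred.add((s, "rdf:type", sup))
    return inferred

def match(pattern, graph):
    """Match one triple pattern; terms starting with '?' are variables,
    loosely like a single line of a SPARQL WHERE clause."""
    results = []
    for triple in graph:
        binding = {}
        for term, value in zip(pattern, triple):
            if term.startswith("?"):
                if binding.setdefault(term, value) != value:
                    break
            elif term != value:
                break
        else:
            results.append(binding)
    return results

# Roughly: SELECT ?person WHERE { ?person rdf:type ex:Engineer }
graph = infer_types(triples)
engineers = sorted(b["?person"] for b in match(("?person", "rdf:type", "ex:Engineer"), graph))
print(engineers)
```

The query only finds Jane Smith after the inference step: she was never tagged as an `ex:Engineer` directly, which is the kind of match a plain keyword search would miss.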

…and the Unrealized Semantic Web technologies, which include tools that are not yet standardized or are still conceptual but are important to the functionality of the semantic web:

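- RIF/SWRL: SWRL (Semantic Web Rule Language) combines OWL with a rule language (RuleML), so that if-then rules can be written over the facts in an ontology. More rules, more logic, more checks and balances. RIF (Rule Interchange Format) is another rule layer, designed for exchanging rules between different rule systems.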

- Cryptography: will help ensure and verify that semantic web statements come from a trusted source. This can be achieved with appropriate digital signatures on RDF statements.

- Unifying Logic and Proof: actively researched areas of semantic technology, with no set standards yet. Some companies are looking to automate these layers, while others derive proof through human effort (employing people to develop proof or logic statements based on context).

- Trust: derived statements will be supported by verifying that the premises come from a trusted source (as with cryptography) and by relying on formal, unifying logic when deriving new information.

- User interface: this is how we will be able to use the semantic web – these will be our search engines and other tools we use to retrieve information.
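Rule layers like SWRL add if-then logic on top of the facts. The sketch below, in plain Python, adapts the well-known uncle example from the SWRL proposal (if ?x1 has parent ?x2 and ?x2 has brother ?x3, then ?x1 has uncle ?x3) and keeps applying it until no new facts appear. The people, property names, and functions are hypothetical, and a real rule engine is far more general than this single hard-coded rule.

```python
# Hypothetical facts, stored as (subject, predicate, object) triples.
facts = {
    ("ex:Sam", "ex:hasParent", "ex:Ana"),
    ("ex:Ana", "ex:hasBrother", "ex:Luis"),
}

def apply_uncle_rule(graph):
    """hasParent(?x1, ?x2) AND hasBrother(?x2, ?x3) => hasUncle(?x1, ?x3)"""
    new = set()
    for x1, p1, x2 in graph:
        if p1 != "ex:hasParent":
            continue
        for s, p2, x3 in graph:
            if s == x2 and p2 == "ex:hasBrother":
                new.add((x1, "ex:hasUncle", x3))
    return new

def forward_chain(graph, rules):
    """Apply every rule until no new triples appear (a fixed point)."""
    graph = set(graph)
    while True:
        derived = set().union(*(rule(graph) for rule in rules)) - graph
        if not derived:
            return graph
        graph |= derived

closed = forward_chain(facts, [apply_uncle_rule])
print(("ex:Sam", "ex:hasUncle", "ex:Luis") in closed)  # True
```

The new fact was never stated anywhere; it was derived from two premises, which is also why the trust and proof layers care where those premises came from.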

Makes perfect sense, doesn’t it? Well, not quite, so here’s how we see it in layperson’s terms:

Each layer is built on the layers below it. It starts with the web addresses and resources already out there (each semantic search company may choose different sources), which become the knowledge base that makes up the semantic web database.

Unicode is in there so that the semantic technologies can read text from any site, no matter what language or script it is written in, and XML gives computers a consistent way to describe the structure of what is written and stored on the Internet (hence, syntax).

From there we get into deciphering the information, which comes through RDF: your basic statement machine, breaking everything down into simple subject-predicate-object statements.

However, as anyone who has studied logic knows, context matters: the surrounding words, phrases, and so forth help determine the actual meaning of the word or phrase in question. RDFS, OWL, and RIF/SWRL add the classes, rules, and constraints that act as a series of checks and balances on the data, and SPARQL queries the result, all based on rules, variables, known facts, and assumptions. Sort of like a science fair project.
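Here is one of those checks and balances in miniature, the OWL-style fact-check. It assumes a hypothetical ontology in which `ex:Country` and `ex:Person` are declared disjoint using the real OWL term `owl:disjointWith`, and flags any resource tagged as both, which is exactly what happens when a candidate named Jordan gets confused with the country. A real OWL reasoner handles many more kinds of axioms; this plain-Python sketch checks only this one.

```python
# Hypothetical data; owl:disjointWith and rdf:type are real terms,
# the ex: names are made up for illustration.
data = {
    ("ex:Country", "owl:disjointWith", "ex:Person"),
    ("ex:Jordan", "rdf:type", "ex:Person"),   # a candidate named Jordan
    ("ex:Jordan", "rdf:type", "ex:Country"),  # wrongly also tagged as the country
    ("ex:JaneSmith", "rdf:type", "ex:Person"),
}

def disjointness_violations(graph):
    """Return (resource, class_a, class_b) for every resource typed with
    two classes that the ontology says can never overlap."""
    types = {}
    for s, p, o in graph:
        if p == "rdf:type":
            types.setdefault(s, set()).add(o)
    violations = []
    for class_a, p, class_b in graph:
        if p != "owl:disjointWith":
            continue
        for resource, classes in types.items():
            if class_a in classes and class_b in classes:
                violations.append((resource, class_a, class_b))
    return sorted(violations)

print(disjointness_violations(data))
```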

Factor in unifying logic and proof to make sure your “fuzzy logic” makes as much sense as a machine can determine, then filter everything through the still-abstract trust technologies, all while verifying RDF digital signatures through the cryptography layer, and out the user interface you go!
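Part of the cryptography layer can be sketched with Python’s standard `hashlib`. This assumes hypothetical triples and a simple sorted serialization: the publisher computes a SHA-256 digest of the data, and a reader recomputes it to detect tampering. It shows only the integrity half of the story. A real digital signature would also sign that digest with the publisher’s private key so readers can verify who published it, which the standard library does not provide, and real RDF canonicalization must handle cases (such as blank nodes) that this sketch ignores.

```python
import hashlib

def canonical_bytes(graph):
    """Serialize triples in sorted order so the same data always yields
    the same bytes, however it happened to be stored."""
    lines = [" ".join(triple) + " ." for triple in sorted(graph)]
    return "\n".join(lines).encode("utf-8")

def digest(graph):
    """SHA-256 fingerprint of the canonical serialization."""
    return hashlib.sha256(canonical_bytes(graph)).hexdigest()

# Hypothetical statements a publisher releases, plus their digest.
# Integrity check only: a real digital signature would also sign this
# digest with the publisher's private key.
published = {
    ("ex:JaneSmith", "ex:worksFor", "ex:AcmeCorp"),
    ("ex:JaneSmith", "ex:jobTitle", "ex:SeniorEngineer"),
}
published_digest = digest(published)

# A reader recomputes the digest over what actually arrived.
received = set(published)
print(digest(received) == published_digest)  # True: unchanged data

# Someone quietly swaps the employer.
tampered = (received - {("ex:JaneSmith", "ex:worksFor", "ex:AcmeCorp")}) | {
    ("ex:JaneSmith", "ex:worksFor", "ex:OtherCo")
}
print(digest(tampered) == published_digest)  # False: altered data
```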

This, of course, is a highly non-technical depiction of how the semantic web works. Essentially, the semantic web is like a plug-in to the existing web: an extension of it, not a replacement by any stretch of the imagination. It acts as a very sophisticated filtration system, so semantic web tools can be developed for general search as well as for proprietary use within an enterprise. We’ll explore a couple of these possibilities in the coming weeks.

We welcome anyone to contribute a more formal explanation than we’ve been able to provide. But hopefully this gives you a basic understanding of the technology behind the search engines we’ll be outlining over the next month or two. We hope you enjoy the SourceCon Semantic Search Series!