LinkedIn is inching toward 2 million user groups. Passionate people create these communities to engage others with common interests. Nearly half are “open groups” rather than “members only” groups, which allows their discussions to be indexed by search engines. We all know that users can join only up to 50 groups. If only we could track more groups to gain insight about active users, group owners, and influential participants. Here is a way to do that!

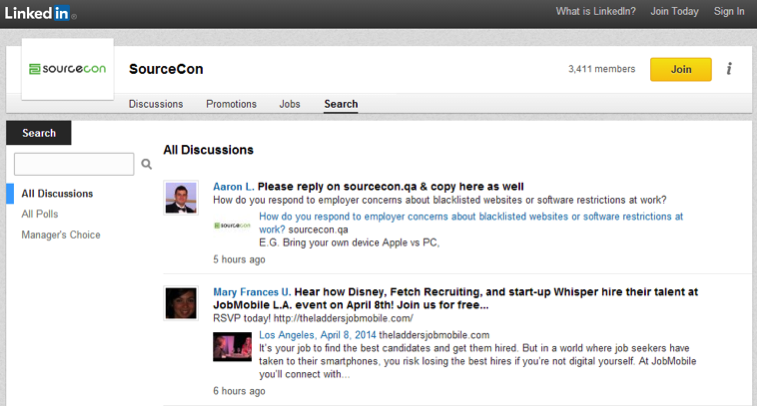

Since the SourceCon Group is open, it can be viewed without logging in to LinkedIn. You can even click through links that redirect you to the original content.

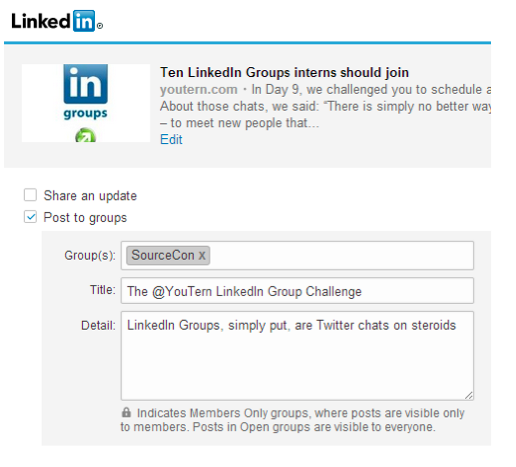

Viewing this page, you will notice that some links have more context, images, and detail than others. Those without titles and details (see image) were shared automatically or created natively by someone in a hurry. Now for the fun part!

Kimonolabs is a web tool in open beta that makes scraping and crawling websites super easy. During the beta, all members have PRO-level access, so this is 100% free; PRO would cost $15/mo once they shift out of beta. After signup, you can use their awesome bookmarklet (I can see Glenn Gutmacher’s smile from here) and start building without installing a thing.

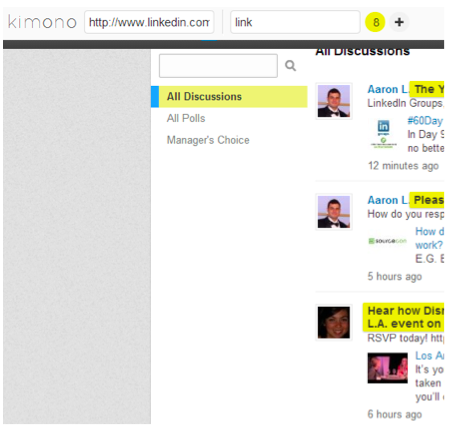

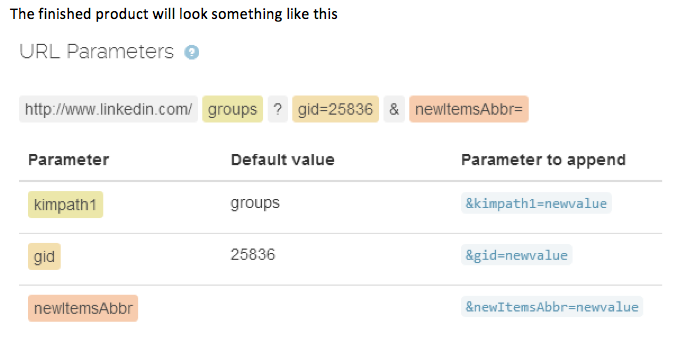

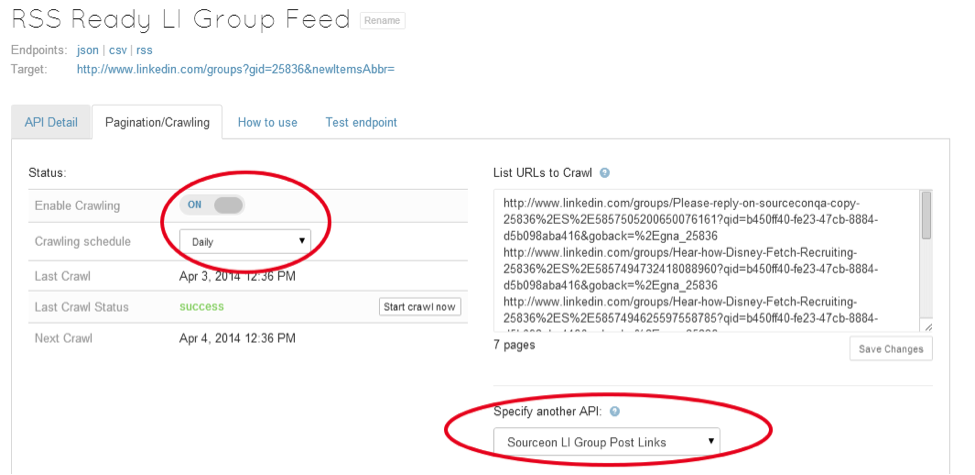

For this to work properly, we need to create two APIs: one to collect the links, and a second to crawl those links for the information we need. Using Kimono, you select the links that point to the actual posts and label them so the system can learn a pattern. Kimono handles the rest on the backend, creating an API that GETs only the links you need and can curl, crawl, or schedule future visits to look for new links.
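If you are curious what that two-step pattern looks like under the hood, here is a minimal Python sketch. It is not Kimono's implementation; it just illustrates "collect links first, then crawl each link" using the standard library. The `post-link` class name, the sample HTML, and the `fake_fetch` helper are all hypothetical stand-ins for a real public group page.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Step 1: collect href values from anchors that carry a given CSS class."""

    def __init__(self, css_class):
        super().__init__()
        self.css_class = css_class
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        classes = (attr_map.get("class") or "").split()
        if self.css_class in classes and attr_map.get("href"):
            self.links.append(attr_map["href"])


class TitleExtractor(HTMLParser):
    """Step 2: pull the text of the first <h1> found on a post page."""

    def __init__(self):
        super().__init__()
        self._in_h1 = False
        self._parts = []
        self.title = None

    def handle_starttag(self, tag, attrs):
        if tag == "h1" and self.title is None:
            self._in_h1 = True

    def handle_data(self, data):
        if self._in_h1:
            self._parts.append(data)

    def handle_endtag(self, tag):
        if tag == "h1" and self._in_h1:
            self.title = "".join(self._parts).strip()
            self._in_h1 = False


def collect_links(listing_html, css_class="post-link"):
    """Return the post links found on a listing page."""
    parser = LinkCollector(css_class)
    parser.feed(listing_html)
    return parser.links


def crawl_links(links, fetch):
    """Visit each link with the supplied fetch function and extract a title."""
    results = []
    for url in links:
        parser = TitleExtractor()
        parser.feed(fetch(url))
        results.append({"link": url, "title": parser.title})
    return results


# Hypothetical sample pages standing in for a group page and its posts.
LISTING = (
    '<a class="post-link" href="/post/1">One</a>'
    '<a href="/about">About</a>'
    '<a class="post-link" href="/post/2">Two</a>'
)
PAGES = {"/post/1": "<h1>First post</h1>", "/post/2": "<h1> Second post </h1>"}


def fake_fetch(url):
    """Stand-in for a real HTTP request; returns canned HTML."""
    return PAGES[url]


links = collect_links(LISTING)
posts = crawl_links(links, fake_fetch)
print(posts)
```

Swapping `fake_fetch` for a function that performs a real HTTP request is the only change needed to point this at live pages, which is roughly the work the hosted tool saves you from doing by hand.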

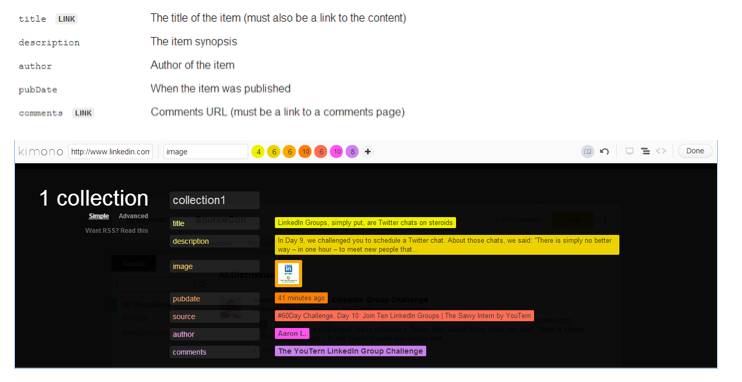

Now visit the public group site again and label the items we want to extract. To create a valid RSS feed, you need to include these specific labels and naming conventions.
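For reference, the RSS 2.0 format itself expects a channel with a `title`, `link`, and `description`, and each item needs at least a `title` or a `description`. The sketch below is a rough illustration of why the field names matter: it builds a feed from labeled items with Python's standard library. The `build_rss` function and the sample data are hypothetical, and this is not how Kimono generates its feed.

```python
import xml.etree.ElementTree as ET


def build_rss(channel_title, channel_link, channel_description, items):
    """Build an RSS 2.0 document (as a string) from a list of item dicts."""
    rss = ET.Element("rss", version="2.0")
    channel = ET.SubElement(rss, "channel")
    for tag, text in (
        ("title", channel_title),
        ("link", channel_link),
        ("description", channel_description),
    ):
        ET.SubElement(channel, tag).text = text

    for item in items:
        # RSS 2.0 wants at least one of title/description on every item,
        # so items missing both are skipped.
        if not (item.get("title") or item.get("description")):
            continue
        node = ET.SubElement(channel, "item")
        for tag in ("title", "link", "description", "pubDate"):
            if item.get(tag):
                ET.SubElement(node, tag).text = item[tag]

    return ET.tostring(rss, encoding="unicode")


# Hypothetical labeled items, as they might come out of a scrape.
sample_items = [
    {"title": "Sourcing tips", "link": "https://example.com/a"},
    {"link": "https://example.com/untitled"},  # no title or description
]
feed_xml = build_rss(
    "Example group", "https://example.com/group", "Hypothetical feed", sample_items
)
print(feed_xml)
```

An item that was labeled with only a link and nothing else gets dropped here, which is the kind of problem consistent labeling is meant to avoid.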

Once done, view your new API to enable crawling using the first API as the source of links. On the “API details” tab, you can schedule email updates to notify you each time an update is found. Data nerds will love the endpoint options of JSON, CSV, or RSS. The API can also be called via curl, jQuery, Node, PHP, Python, or Ruby libraries, or via webhooks. That means you can integrate the data you collect into almost any existing database or CRM you wish. You can also build a sexy HTML5 webapp or embed it in a website widget.
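To give a feel for the integration side, here is a small Python sketch that takes a JSON response and flattens it into CSV rows you could load into a database or CRM. The payload shape (a `results` object holding a `posts` list) and the field names are assumptions made for this example, not a documented Kimono format, so adjust them to whatever your endpoint actually returns.

```python
import csv
import io
import json

# Hypothetical JSON payload; a real endpoint's structure may differ.
SAMPLE_RESPONSE = """
{"results": {"posts": [
  {"title": "Sourcing tips", "link": "https://example.com/a", "author": "Pat"},
  {"title": "Boolean strings", "link": "https://example.com/b", "author": "Sam"}
]}}
"""


def rows_from_payload(payload_text, collection="posts"):
    """Parse the JSON text and return the list of scraped rows."""
    data = json.loads(payload_text)
    return data.get("results", {}).get(collection, [])


def rows_to_csv(rows, fields=("title", "link", "author")):
    """Serialize rows to CSV text, keeping only the requested fields."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=fields, extrasaction="ignore", lineterminator="\n"
    )
    writer.writeheader()
    for row in rows:
        # Missing fields become empty cells instead of raising an error.
        writer.writerow({field: row.get(field, "") for field in fields})
    return buffer.getvalue()


rows = rows_from_payload(SAMPLE_RESPONSE)
csv_text = rows_to_csv(rows)
print(csv_text)
```

Going through JSON first keeps the field selection in your own hands, which is handy when the target system expects specific column names.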

With RSS, no tech skills are required. Anyone with MS Outlook has access to an RSS reader. Now do you get why RSS rocks? I can track comments and engagement in as many groups as I want. I just can’t participate in the conversation. As an added bonus, group owners can use their RSS feed to promote their group with tools like Hootsuite, which can schedule updates to any social platform. LinkedIn loses nothing in this equation as we continue driving traffic to them (unless you just want to track the link source instead).
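For anyone who would rather script the tracking than use a reader, here is a minimal sketch of reading an RSS feed and picking out the items you have not seen yet. The sample feed, the `parse_items` and `new_items` helpers, and the URLs are hypothetical; in practice you would download the feed text from your own feed URL first.

```python
import xml.etree.ElementTree as ET

# Hypothetical RSS 2.0 feed standing in for a tracked group.
SAMPLE_FEED = """<rss version="2.0">
  <channel>
    <title>Example group</title>
    <link>https://example.com/group</link>
    <description>Hypothetical feed</description>
    <item>
      <title>Sourcing tips</title>
      <link>https://example.com/a</link>
    </item>
    <item>
      <title>Boolean strings</title>
      <link>https://example.com/b</link>
    </item>
  </channel>
</rss>"""


def parse_items(feed_xml):
    """Return a list of {"title", "link"} dicts for every item in the feed."""
    root = ET.fromstring(feed_xml)
    items = []
    for node in root.findall("./channel/item"):
        items.append(
            {
                "title": node.findtext("title", default=""),
                "link": node.findtext("link", default=""),
            }
        )
    return items


def new_items(items, seen_links):
    """Keep only the items whose link has not been seen before."""
    return [item for item in items if item["link"] not in seen_links]


items = parse_items(SAMPLE_FEED)
fresh = new_items(items, seen_links={"https://example.com/a"})
print(fresh)
```

Keeping a set of links you have already seen, one per group, is enough to follow as many groups as you like without joining any of them.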

I’m sure many of you are wondering if Kimonolabs will scrape all websites, and the answer is no. If you need to log in to view content, it will not work. It will work to track Twitter and public posts on Facebook, Google Plus, and most other websites.

image credit: bigstock